A Comprehensive Guide to QA Strategies for Testing AI-Based Systems

This blog will take you through the Quality Assurance Strategies for Testing AI-based Systems. You will learn the challenges and the key aspects that can elevate your testing approach and ensure the reliability of AI systems.

AI systems have moved from experimental projects into production-critical infrastructure, and the pressure on quality assurance has grown with them. Generative AI, in particular has introduced classes of failure that conventional testing was not designed for.

This guide covers the core challenges and the most effective QA strategies for ensuring AI-based systems are reliable, fair, and production-ready.

Why Testing AI Systems Is Uniquely Hard

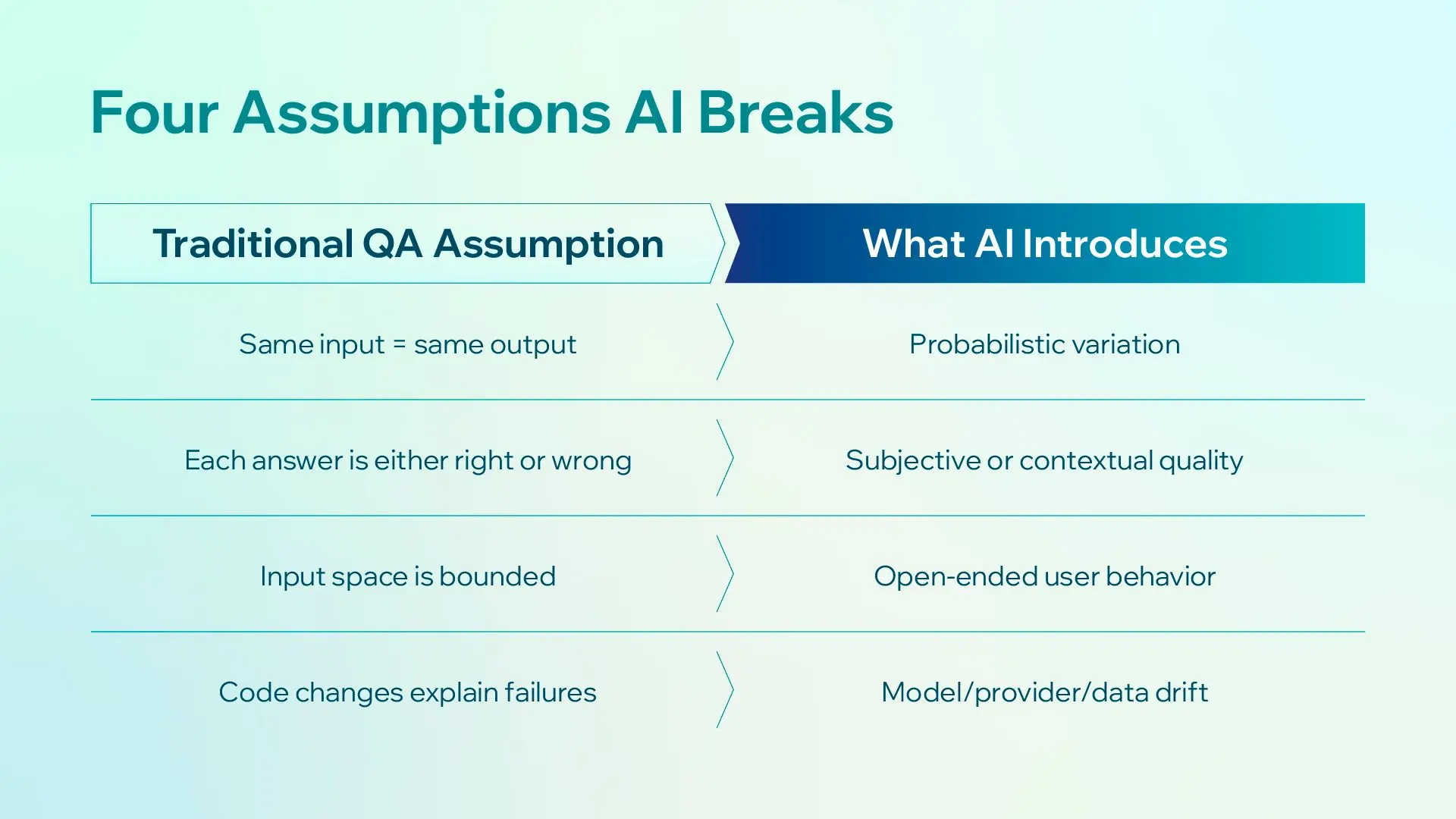

Traditional QA is built on a simple premise: given the same inputs, a system should always produce the same outputs. You define what "correct" looks like, write tests to verify it, and run them on every build. AI breaks every one of those assumptions.

Non-Determinism

AI models, especially large language models (LLMs) and generative systems, do not produce deterministic outputs. The same prompt can yield different responses on consecutive runs due to temperature settings, sampling strategies, or model updates.

This makes confirmation and regression testing genuinely difficult. "Did this output change?" is no longer a yes/no question; it becomes "did this output change in a meaningful way?"

No Single Correct Output

In conventional testing, you always know what the right answer is. In AI testing, defining a "correct" output is often subjective or contextual. What is the correct summary of a 10-page document? Is a model's confidence score of 0.78 acceptable?

Testers must move from binary pass/fail to tolerance-based evaluation frameworks.

An Essentially Infinite Input Space

A traditional login form has a finite and testable set of inputs. An AI model can receive virtually any input in any language, format, or framing. Achieving meaningful coverage across this space requires deliberate strategies around edge cases, adversarial inputs, and real-world data sampling. You cannot enumerate your way through it.

Self-Learning and Model Drift

AI models change over time, either through scheduled retraining, continuous fine-tuning, or silent infrastructure updates by a third-party provider. A model that performed well last quarter may behave differently today with no explicit code change in your codebase. This introduces a class of failures that conventional CI/CD pipelines are not designed to catch: model drift.

Emergent Failures in Generative AI

With the rise of LLM-powered features such as chatbots, copilots, document analyzers, and code generators, a new class of failure has become critical: hallucination. Models confidently generate plausible-sounding but factually incorrect outputs. These failures don't surface in unit tests. They require evaluation frameworks built specifically for generative outputs.

QA Strategies for AI-Based Systems

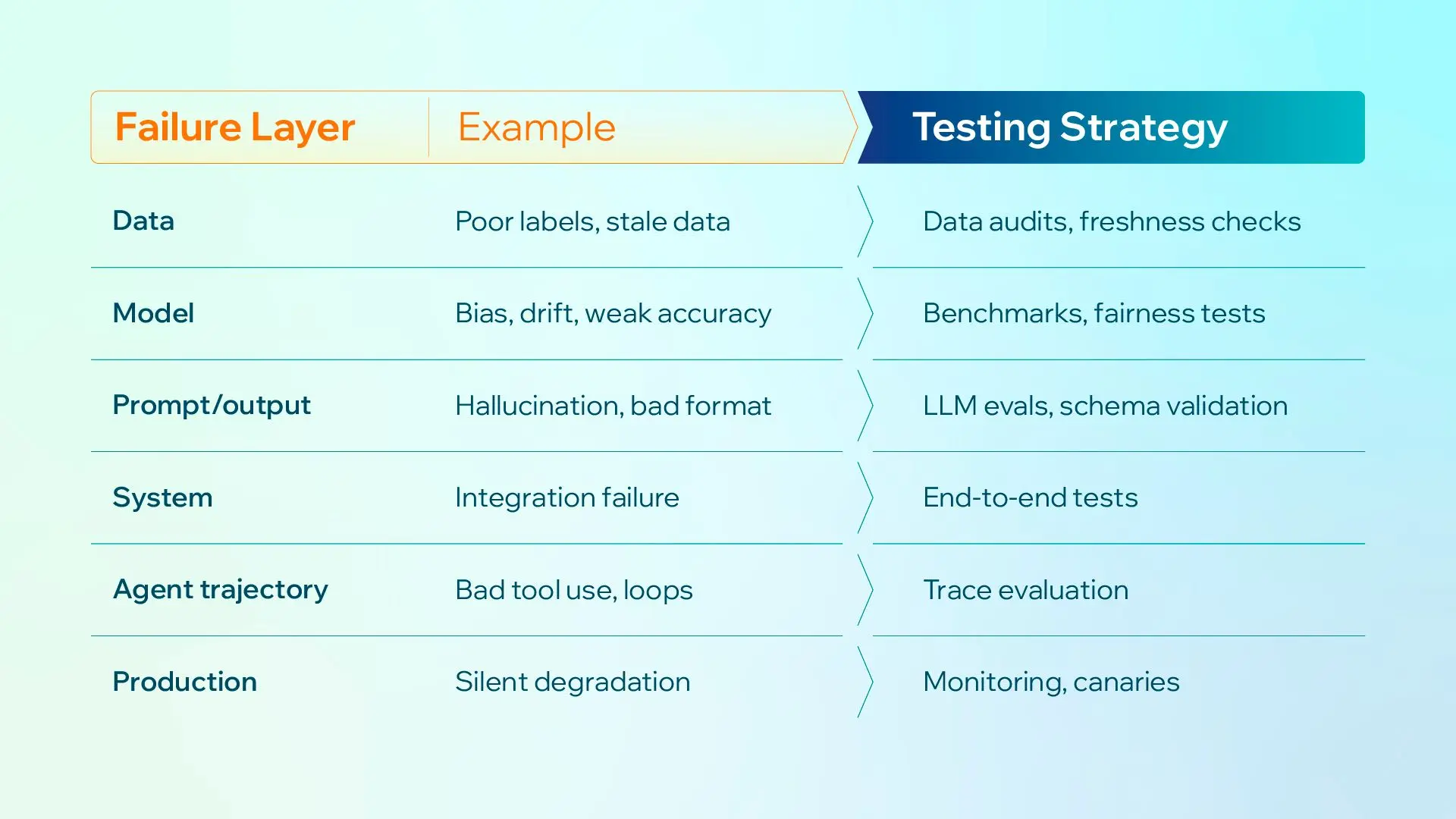

There is no single technique that covers all of the above. Effective AI QA requires a layered approach that combines model-level evaluation, data-level validation, and system-level testing.

1. Data-Driven Testing

An AI model is only as good as its training data, and your test suite is only as meaningful as the data that drives it. Data-driven testing goes beyond feeding in a static set of examples; it means constructing test datasets that reflect the full scope of real-world scenarios the system will encounter.

What effective data-driven testing looks like:

-

A golden eval set as the foundation: A versioned set of inputs covering your critical scenarios, each with a known good outcome or written rubric, stored alongside the prompts and tool definitions. Run on every prompt change, model bump, or fine-tune.

-

Boundary and edge case coverage: Inputs at the extremes of the expected distribution, including very short inputs, unusually long inputs, rare languages, and unusual formatting.

-

Distribution shift testing: Evaluate the model on data that differs from its training distribution.

-

Data freshness: Verify that the model performs acceptably on recent data, not just the snapshot it was trained on. This is how you catch model drift early.

-

Label quality audits: Regularly audit your ground truth labels, especially in actively maintained systems where labeling teams may introduce inconsistencies over time.

A strong data-driven test suite becomes your model's regression baseline. Every time the model is retrained or updated, you run it against the same curated dataset and compare outputs.

2. LLM-Specific Testing

If your AI system is powered by a large language model, whether that is an in-house fine-tuned model or a third-party API like OpenAI or Claude, there is a family of failure modes that require dedicated testing strategies.

Two open-source frameworks cover most practical needs: DeepEval (a pytest-style Python library with a large catalog of metrics) and Promptfoo (a YAML-configured CLI tool with strong CI/CD and red-teaming support). Most of the strategies in this section can be implemented in either.

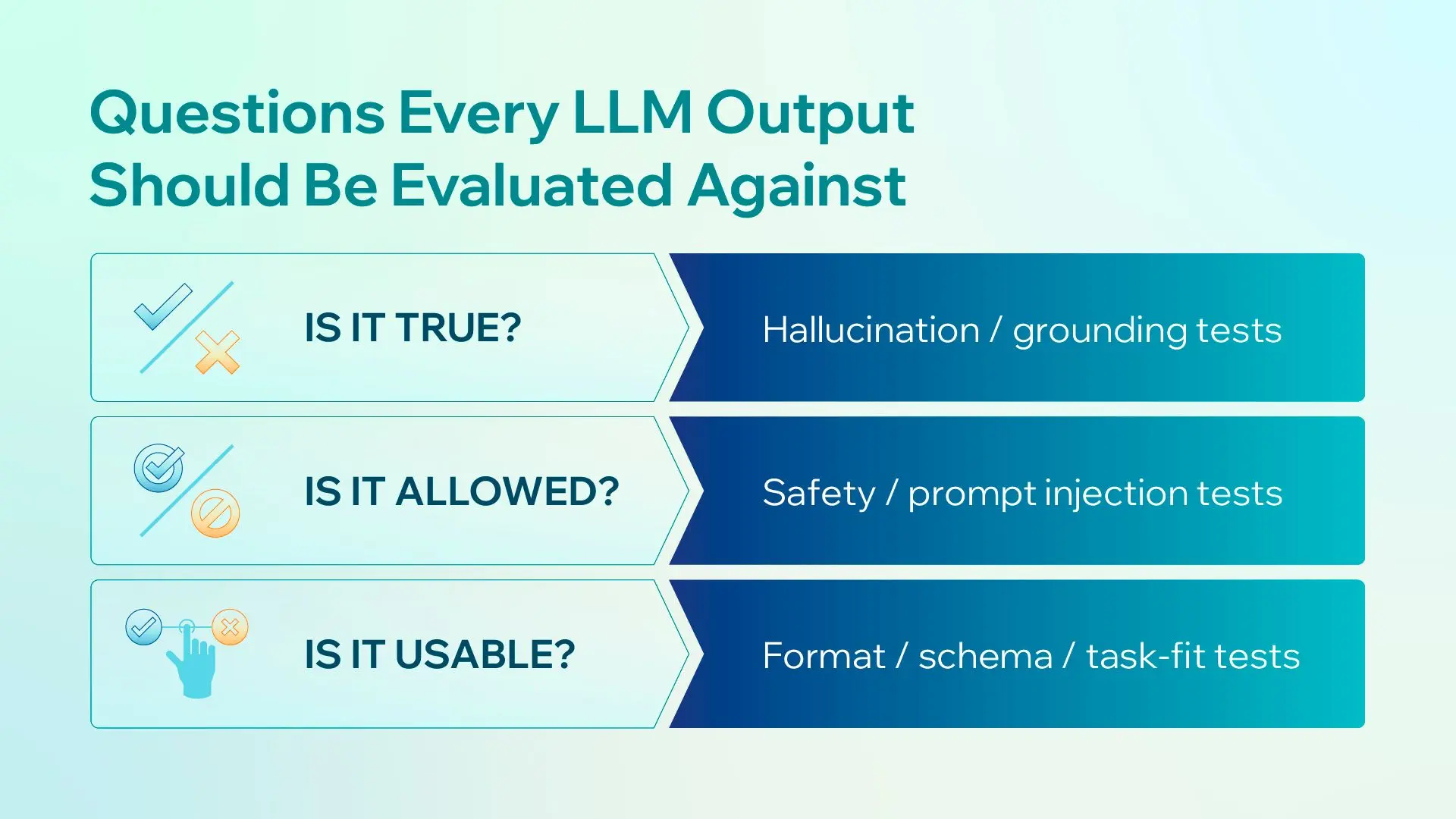

Hallucination Testing

Hallucinations occur when a model generates confident, fluent text that is factually incorrect. For high-stakes applications in medical, legal, or financial contexts, this is a critical risk.

How to test for it:

-

Build a reference Q&A dataset with verified ground truth answers, then score each model response against the expected answer to flag factual divergences.

-

Run consistency checks by asking the same question in multiple phrasings and verifying the model doesn't contradict itself.

-

Test for citation accuracy in RAG (Retrieval-Augmented Generation) systems by verifying that when the model attributes a claim to a source, the source actually supports that claim.

Output Consistency and Format Validation

When your system depends on structured outputs from an LLM (JSON, SQL, specific schemas), test that the model reliably produces valid, parseable output under varied conditions, including edge cases in the input that might cause the model to deviate from the expected format.

3. Non-Deterministic Output Validation

Because AI outputs vary by run, your testing framework needs to shift from exact-match assertions to range-based and semantic evaluation.

Practical approaches:

-

Acceptable variation ranges: Define upper and lower bounds on metrics rather than point targets. A model response doesn't need to be identical; it needs to be within an acceptable quality band.

-

Semantic similarity scoring: Use embedding-based similarity (cosine similarity with a sentence transformer model) to check whether a generated response is semantically equivalent to a reference answer, even if the exact wording differs.

-

LLM-as-judge: Use a separate, well-prompted LLM to evaluate the quality, accuracy, and appropriateness of another LLM's outputs. This scales better than human review for regression testing. Define your evaluation rubric clearly and include it in the judge prompt.

-

Statistical regression testing: Run your model N times on the same input and build a distribution of outputs. A model update that shifts this distribution, even if individual outputs look fine, may signal underlying behavioral drift.

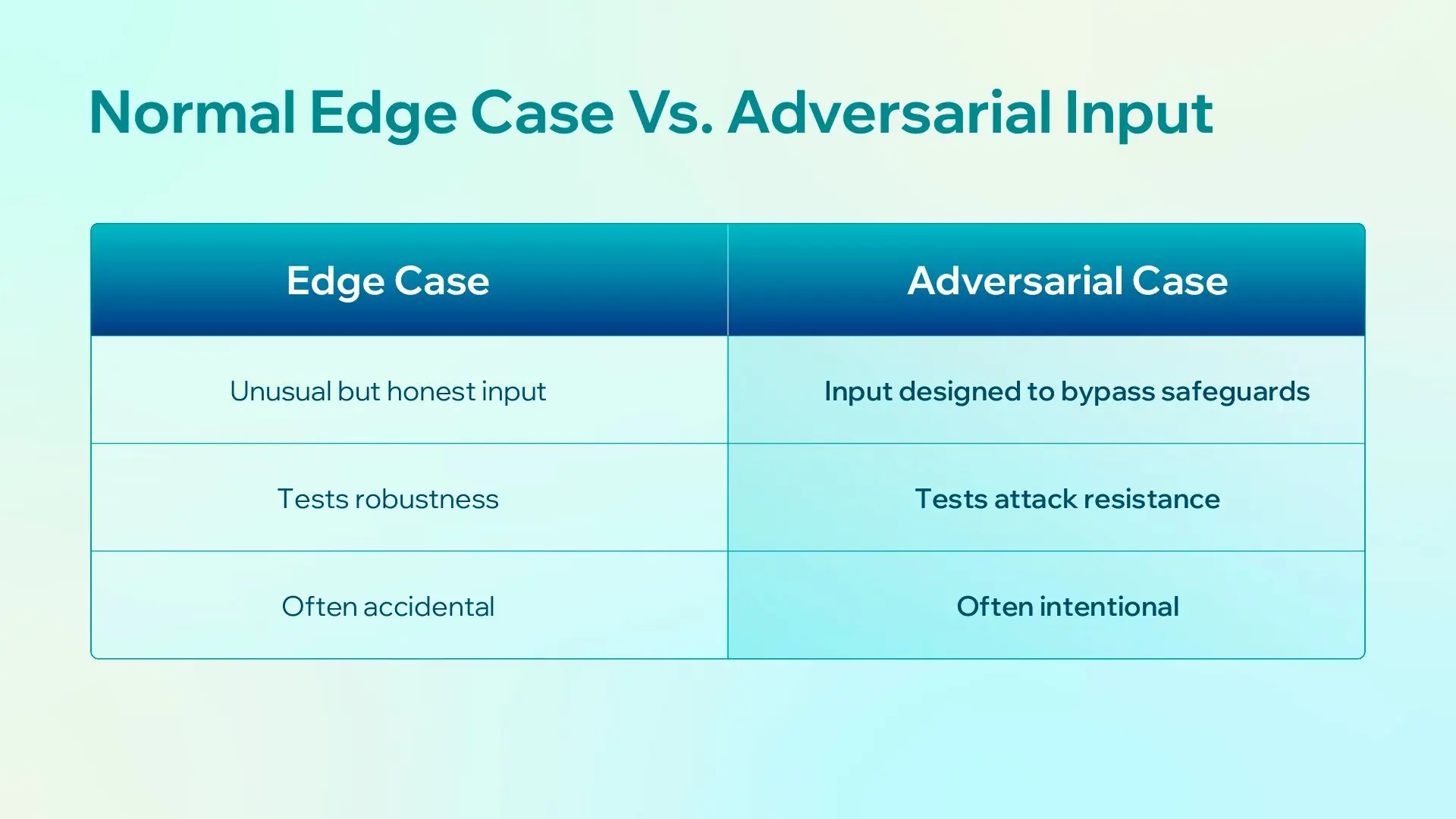

4. Adversarial Testing

Adversarial testing probes your AI system with inputs specifically designed to cause it to fail. This goes beyond normal edge cases; it is about finding the vulnerabilities a malicious actor might exploit.

-

Direct prompt injection**:** maintain an internal pattern library ("ignore previous instructions," role confusion, encoded payloads, system-prompt extraction) and run it before each release.

-

Indirect injection attacks: Malicious instructions embedded in documents, emails, or web pages that the model retrieves or processes (common in RAG systems).

-

Jailbreaks**:** bypass safety policy and produce content the training was supposed to refuse.

Every failure you find goes into the golden eval set so it gates the next release.

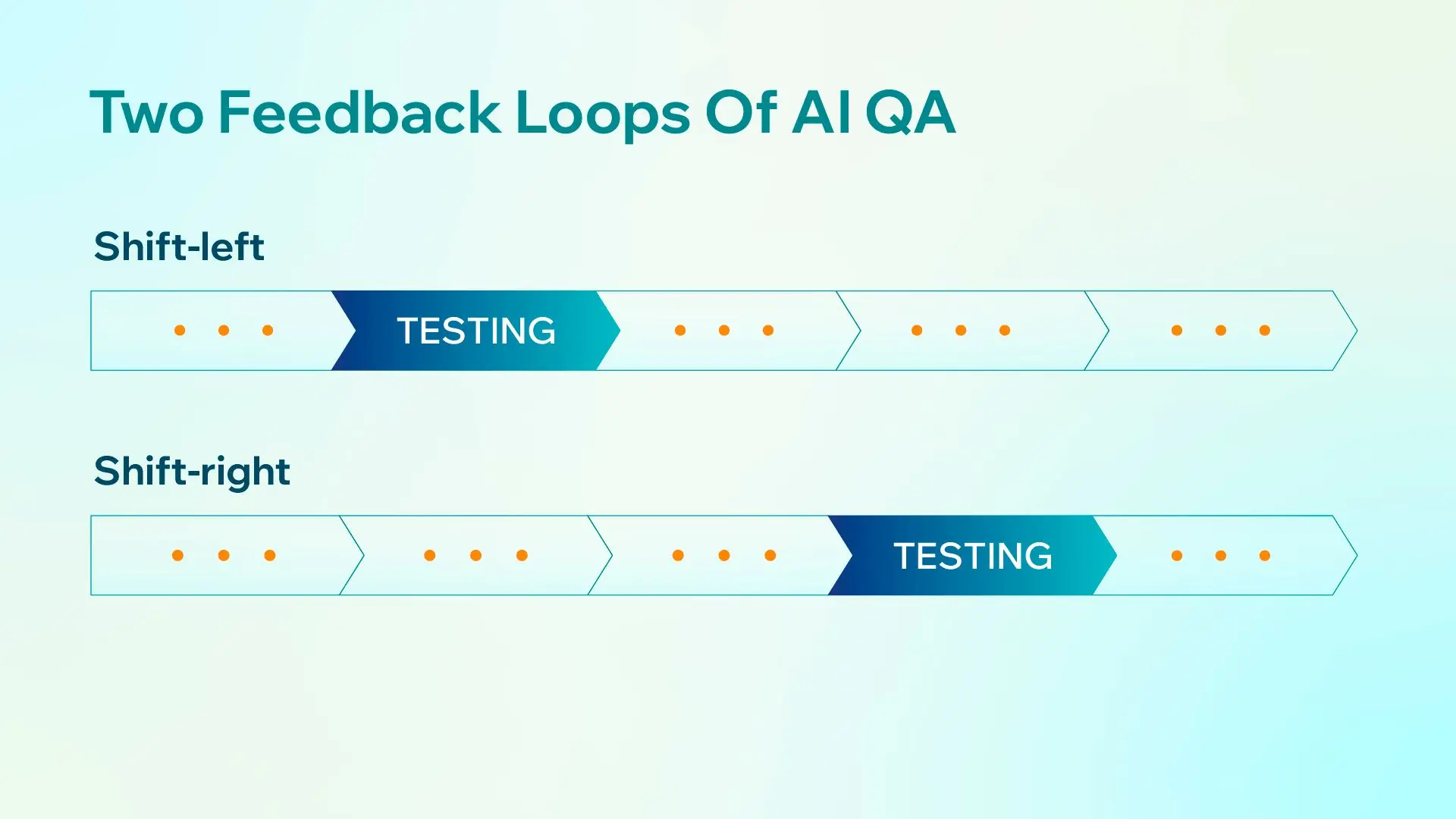

5. Shift-Left and Shift-Right Testing

Shift-left means moving testing earlier in the development lifecycle, specifically testing prompts and model behavior during development rather than waiting for a staging environment. For AI systems, this means:

-

Evaluating model outputs during the fine-tuning phase, not just after deployment

-

Running automated evaluation benchmarks as part of the training pipeline

-

Including QA engineers in dataset curation and labeling, not just post-training evaluation

Shift-right means extending testing into production. Given that AI systems can degrade silently due to model drift, input distribution shifts, or third-party model updates, production monitoring needs to run continuously after release.

Shift-right practices include:

-

Shadow testing: Running a new model version in parallel with the production model, comparing outputs without exposing users to the new version yet

-

A/B testing: Exposing a percentage of real users to a new model version and measuring outcomes on business metrics, not just technical accuracy

-

Production monitoring: Tracking model confidence scores, output length distributions, refusal rates, and user feedback signals such as thumbs up/down and re-prompts as real-time quality signals

-

Canary deployments: Gradually rolling out a new model version to a small user segment and monitoring for regressions before full release

-

Cost and token anomalies as security signals: Sudden cost spikes or output length blowups often indicate prompt injection or runaway generation, so instrument them as first-class alerts.

6. Experience-Based Testing

Not all test cases can be derived algorithmically. AI systems often have subtle failure modes that only surface through human intuition, domain expertise, and creative exploration.

Error guessing involves experienced testers predicting likely failure points based on:

-

Known weaknesses in the model architecture or training data

-

Common patterns from past bugs in similar systems

-

Domain knowledge about edge cases that end users are likely to encounter

Exploratory testing takes a more freeform approach where testers interact with the system without a predefined script, following hunches and unexpected results. For AI systems with poorly defined specifications, which describes most of them, exploratory testing is often where the most meaningful bugs are found.

Checklist-based testing bridges the two: a curated, periodically updated checklist of known risky behaviors and scenarios that every release must be evaluated against. Think of it as your institutional memory for AI failure modes.

Error analysis as a first-class QA discipline: The highest-leverage activity in AI QA is not picking the perfect metric, but sitting down with 20 to 50 real production traces, reading through them, and writing down exactly what went wrong in each one. Turn those notes into simple pass/fail checks that become part of your regression suite.

Generic "helpfulness" scores rarely catch the bugs your users actually hit, but checks grounded in your own failure logs do. Make error analysis the starting point for your test suite, not something you do after the fact.

Conclusion

Testing AI-based systems is not a solved problem, and the field is evolving fast: new model architectures, new attack surfaces, and new expectations from users who increasingly rely on AI for high-stakes decisions.

Effective AI QA combines technical rigor such as non-deterministic validation and adversarial probing, human judgment such as exploratory testing and domain expertise, and organizational discipline such as shift-left practices and production monitoring.

QA engineers create the most value when they understand how AI systems fail in production and build the frameworks to catch those failures before users do.

Need help building a QA strategy for your AI system? CodeLink's engineering team works with AI-powered products across healthcare, fintech, and enterprise software. Get in touch to discuss your testing challenges.

Chi Vu

QA Engineer

Chi Vu is a seasoned QA Engineer with eight years in manual and automation testing, excels in end-to-end strategies. Holding a Master's in Software Engineering, she optimizes frameworks and collaborates effectively to ensure high-quality software delivery.